Generally we are only interested in web requests made by humans, not in requests made by bots, but the HTTP logs generated by an IIS web server or the access logs generated by an Apache web server record both. So how do we remove log entries generated by bots from the analysis?

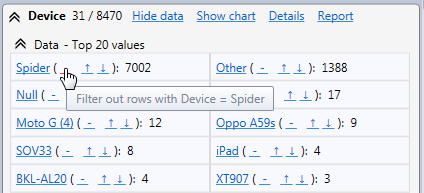

We can use the result of parsing the user agent string. A Device field is extracted from it, and well-known bots get the specific value Spider. So we need to exclude this value from the view.

This results in the following filter query:

NOT Device='Spider'

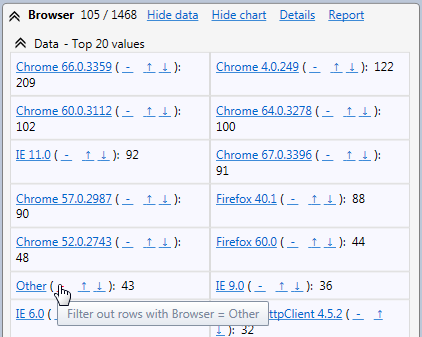

But this may not be enough. Some spiders are not well known and will therefore not be excluded by this filter.

To improve this, we can use the fact that most internet users access web sites with a well-known browser. A request from an unknown browser is more likely to come from something else, such as a bot. So we also need to exclude all log rows with Browser='Other'.

This leads to the following query:

NOT Device='Spider' AND NOT Browser='Other'
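For readers who post-process exported log rows outside the tool, the same filter logic can be sketched in Python. This is only an illustration: it assumes each parsed log row is a dict carrying the Device and Browser fields described above, and the `Path` field, the sample values, and the function name `is_probably_human` are made up for the example.

```python
def is_probably_human(row):
    """Mirror the filter NOT Device='Spider' AND NOT Browser='Other'."""
    return row.get("Device") != "Spider" and row.get("Browser") != "Other"


# Hypothetical parsed log rows; the field values are invented for illustration.
rows = [
    {"Device": "Desktop", "Browser": "Chrome", "Path": "/"},
    {"Device": "Spider", "Browser": "Googlebot", "Path": "/robots.txt"},
    {"Device": "Other", "Browser": "Other", "Path": "/wp-login.php"},
]

# Keep only the rows that pass both exclusions.
human_rows = [row for row in rows if is_probably_human(row)]
```

Only the first sample row survives: the second is excluded as a known spider, the third because its browser could not be identified.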

This query is probably not perfect, because some bots hide behind the user agent string of a well-known browser. Sometimes you can still improve the result a little by excluding old browser versions, which may come from an outdated user agent string that was never updated in the code of a bot. Internet users generally update their browser, or it is upgraded automatically, to keep web browsing working and safe.
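As a rough sketch of the old-version idea, the Python snippet below flags rows whose browser major version falls under a chosen minimum. The `BrowserVersion` field name, the helper names, and the thresholds are all hypothetical; suitable minimums depend on your audience and need to be revised as browsers release new versions.

```python
# Hypothetical minimum major versions; adjust them to your own audience.
MIN_MAJOR_VERSION = {"Chrome": 100, "Firefox": 100}


def major_version(version):
    """Return the leading integer of a version string, or None if absent."""
    head = (version or "").split(".", 1)[0]
    return int(head) if head.isdigit() else None


def is_outdated(row):
    """True when the row's browser is listed and older than its minimum."""
    minimum = MIN_MAJOR_VERSION.get(row.get("Browser"))
    if minimum is None:
        return False  # no threshold defined for this browser
    major = major_version(row.get("BrowserVersion"))
    return major is not None and major < minimum


# Usage: drop rows that report an outdated browser version.
rows = [
    {"Browser": "Chrome", "BrowserVersion": "49.0.2623"},
    {"Browser": "Chrome", "BrowserVersion": "120.0.0"},
    {"Browser": "Safari", "BrowserVersion": "17.1"},
]
recent_rows = [row for row in rows if not is_outdated(row)]
```

Rows with a missing or unparseable version are kept rather than dropped, so a parsing gap does not silently remove real visitors.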

Even after that, you may still have bots hiding behind a legitimate browser user agent string, but if your web site has a large enough audience, their share of the traffic should be negligible.